Understanding misinformation: A short guide to why people share false info online and strategies to address it

I. What is misinformation?

Imagine misinformation as an intricate puzzle, often obscuring the boundary between fact and fiction, making it difficult to recognize whether information is accurate (5, 8, 9).

Let’s journey together into the world of misinformation to understand this complicated landscape and equip ourselves with tools to identify and counter it.

Misinformation is false or inaccurate information that is widely shared, often unintentionally (3, 6, 7, 9). It spreads through mediums like social media, word of mouth, or even the news, misleading people into believing things that might be untrue (6, 7, 9).

Misinformation is a general term used to describe several types of false content (5), including:

- Disinformation is the intentional presentation of blatantly untrue stories as if they are real (6). Therefore, the key distinction between disinformation and misinformation is the intent behind the messaging: deliberate versus unintentional sharing of false information.

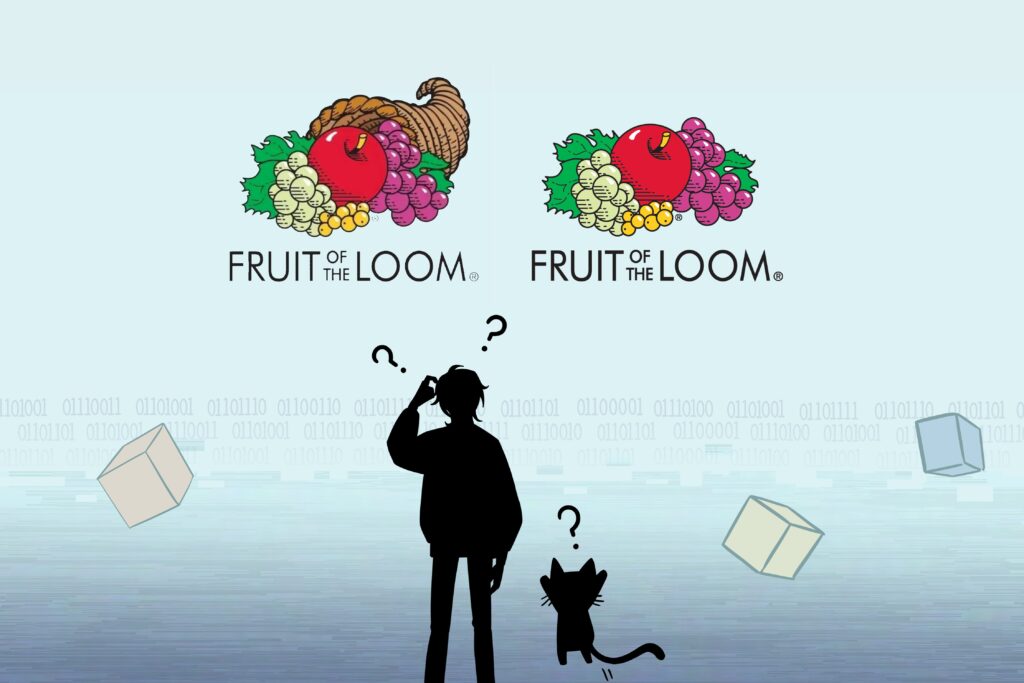

- Conspiracy theories suggest that certain events happen because groups of people are secretly working together towards specific goals, often breaking the law (1, 3, 4, 5, 6, 10).

- Superstitions are beliefs or practices that are often rooted in cultural or traditional beliefs rather than empirical evidence or logical reasoning (4).

There are more types of misinformation, but these examples are some that you will most likely encounter.

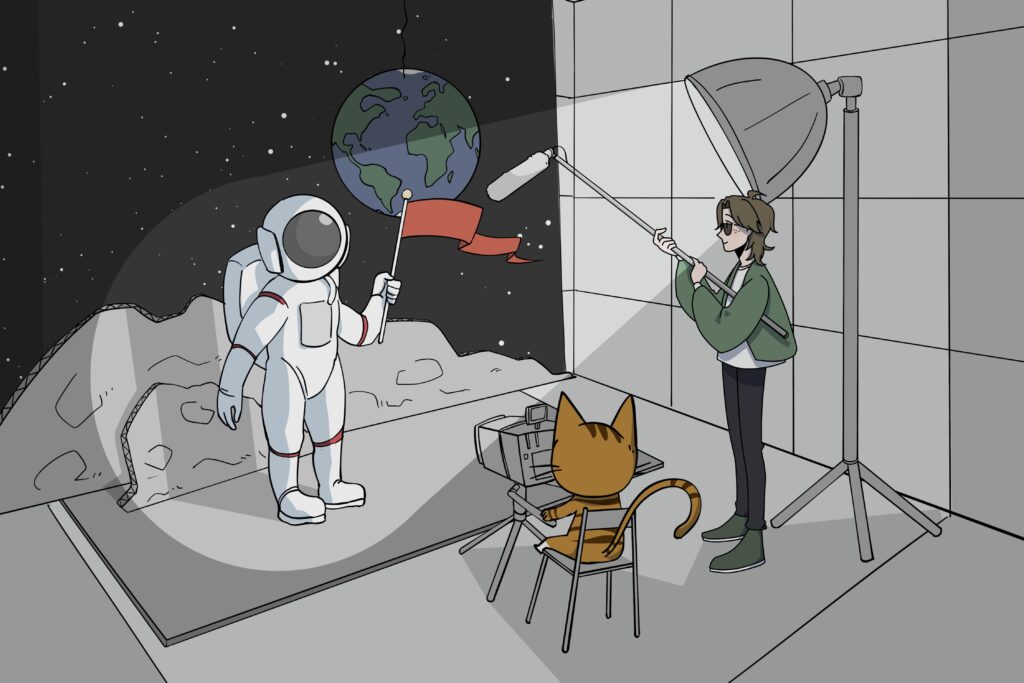

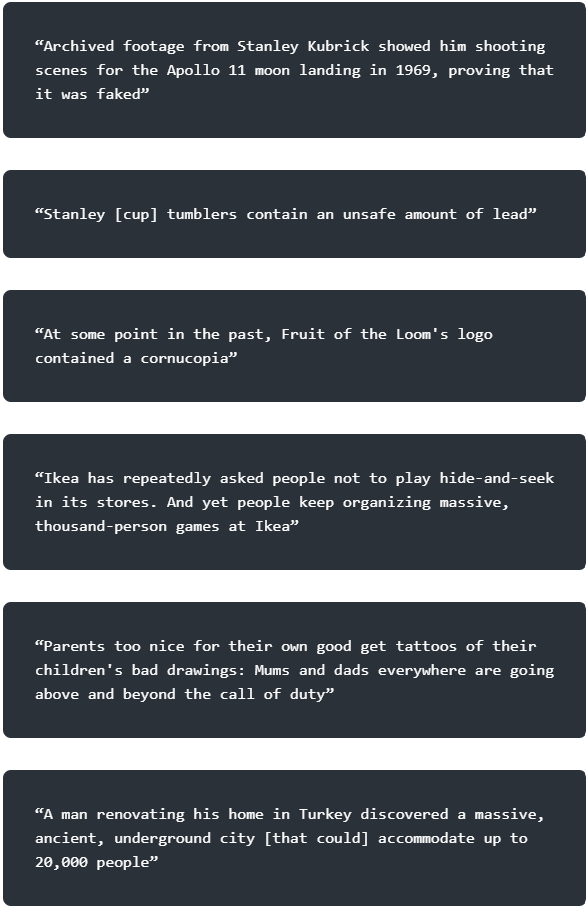

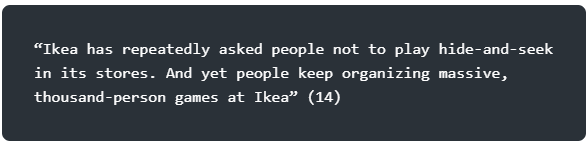

Quiz: Identifying misinformation

Read the following claims and ask yourself whether you would consider sharing them on social media if you saw them cross your screen:

Many people would be open to sharing at least one of these claims. And to be fair, half of them are true. Read on, and we’ll reveal which ones are accurate and give you some advice along the way about how to separate fact from fiction.

Note

Even though some studies show that people who think analytically are less likely to fall for false headlines, the link between critical thinking and skepticism about certain beliefs isn’t straightforward (2, 6, 7). At times, we all share information that ends up being false or misleading. Past work has shown that people still share info that they later recognize as inaccurate because they aren’t thinking about accuracy when they press the button to publish. We’ll explore more about this as we go along.

II. Who is at the greatest risk for falling for misinformation?

Those most at risk of falling for misinformation tend to be reflexive rather than reflective thinkers. Reflexive thinkers often accept information without question, relying heavily on their intuition. In contrast, reflective thinkers tend to question their own intuitions and actively seek evidence before forming beliefs (7).

Despite the evidence that some people are more prone to accept misinformation as factual, all of us can fall victim to misinformation, as human psychology is imperfect.

We will cover several inoculation strategies later, but for now, let’s focus on some digital literacy tips. To begin, we’ll be describing the SIFT method (18), which stands for stop and think, investigate the source, find other coverage, and trace claims and quotes.

The SIFT Method

- Stop and think: Consider the website and its reputation, plus your own purpose, feelings, and cognitive biases.

- Investigate the source: Ask yourself what it is, what you can find out about it, who the author is, and whether it is worth your time.

- Find other coverage: Consider whether other coverage of the same information is similar, if better or more-trusted sources are available, and whether experts agree.

- Trace claims and quotes: Explore whether the original source and context have been accurately presented.

III. Where is misinformation?

Social media has created a new environment for spreading misinformation (6, 7). It is a productive medium for the spread of false information for several reasons (6).

Firstly, humans, both online and in person, tend to seek out others who confirm their own beliefs and form groups with others who think the same way (3, 4). This can create echo chambers where people only hear what they already believe, which reinforces confirmation bias and creates opportunities to share information without thinking critically (3, 4, 5). Social media platforms also use powerful algorithms that learn your preferences, interests, and tendencies, and then suggest content that you are likely to agree with.

Secondly, there are no traditional peer-reviewers like journalists, editors, or experts on social media to check if information is true before it is shared. Inaccurate information can go straight from the writer to the entire internet without first being fact-checked (3).

Thirdly, headlines that make people feel strong emotions or seem important are good at grabbing attention, even if they’re untrue (7, 8). Emotionally charged topics make it hard for people to know what’s true and what’s not, especially when they’re deciding what to share (8). Some people tend to embrace and share false information because it reinforces their sense of self or group identity (1, 2, 5, 7).

Occasionally, false information gets shared by mistake, even by well-informed people (9). They might be distracted or not focused on whether the information is true or not (5, 7, 9). As mentioned before, when we find ourselves sharing information in a knee-jerk way, it is often a result of reflexive, intuitive thinking (5, 7). To counteract these gut reactions, digital literacy skills, such as the SIFT Method, can help you think critically about whether information is true or false.

Additionally, social media websites can implement prompts for accuracy on their platforms as a strategy to help reduce reflexive thinking and the sharing of misinformation (5, 6, 7, 8). For example, when a news story or headline is shared on X or Facebook, a prompt for accuracy would remind the reader to consider the truthfulness and accuracy of what they are about to read (8).
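For readers curious about what such a prompt might look like in practice, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the reminder wording, the function name `share_with_accuracy_prompt`, and the choice to ask the user to confirm before sharing are hypothetical and do not describe how X, Facebook, or the cited studies implement accuracy prompts.

```python
from typing import Callable

# Hypothetical reminder text; real platforms word their prompts differently.
ACCURACY_PROMPT = "Before you share: do you think this headline is accurate?"


def share_with_accuracy_prompt(headline: str, ask: Callable[[str], str]) -> bool:
    """Show an accuracy reminder, then return True if the user still wants to share.

    `ask` is any function that displays a message and returns the user's reply,
    for example the built-in `input`.
    """
    answer = ask(f'{ACCURACY_PROMPT}\n"{headline}"\nShare anyway? (y/n) ')
    return answer.strip().lower() in {"y", "yes"}


# Example usage with canned answers instead of real keyboard input.
print(share_with_accuracy_prompt("Example headline", lambda prompt: "n"))    # False
print(share_with_accuracy_prompt("Example headline", lambda prompt: "yes"))  # True
```

The point of the sketch is the pause, not the gate: the reminder simply shifts attention to accuracy at the moment a person is about to share.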

IV. Why is misinformation dangerous?

Misinformation presents considerable issues as it can oversimplify complex topics and distort reality (1, 8). Incorrect stories and misinformation often make complicated events seem easy to understand, thereby increasing certainty in uncertain circumstances (3). Also, false news spreads faster and more extensively than accurate information, making it challenging to mitigate any damage caused (7, 8, 9).

Repeatedly interacting with false information may increase the likelihood of believing future false information, as repetition makes processing information easier and faster, making us less critical and more accepting of misinformation (7, 8, 9). This is called the illusory truth effect (7): even when we’re told news is false, we might still believe the misinformation because we’ve heard it so much.

For example, Napoleon Bonaparte is commonly portrayed as being short. His death certificate reports a height of 5 feet 2, but that figure was recorded in the traditional French units used before the metric system (17). At first glance, 5 feet 2 might seem short, but when converted to modern measurements, it equals approximately 5 feet 6.5 inches, or 168 to 169 centimeters, which is not far from today’s global average of 5 feet 9 inches for men (17).

The example of Napoleon’s height highlights how false information can become embedded in popular culture, but with a bit of critical thinking and digital literacy skills, one can confirm the truth behind the myth.
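The conversion behind Napoleon’s height can be checked with a few lines of Python. This is a rough sketch under stated assumptions: the length of the old French foot (the pied du roi, taken here as about 32.48 centimeters and divided into 12 pouces) does not come from this guide, and the function names are made up for illustration.

```python
# Assumed constants (not from the guide): the pre-metric French foot
# (pied du roi) was about 32.48 cm and was divided into 12 pouces.
PIED_CM = 32.48
POUCE_CM = PIED_CM / 12
INCH_CM = 2.54  # exact, by definition of the modern inch


def french_height_to_cm(pieds: int, pouces: int) -> float:
    """Convert a height in old French feet and inches to centimeters."""
    return pieds * PIED_CM + pouces * POUCE_CM


def cm_to_feet_inches(cm: float) -> tuple[int, float]:
    """Convert centimeters to modern (imperial) feet and inches."""
    total_inches = cm / INCH_CM
    feet = int(total_inches // 12)
    return feet, total_inches - feet * 12


height_cm = french_height_to_cm(5, 2)
feet, inches = cm_to_feet_inches(height_cm)
print(f"{height_cm:.1f} cm = {feet} ft {inches:.1f} in")  # 167.8 cm = 5 ft 6.1 in
```

With these assumed values the result is about 168 centimeters, or a little over 5 feet 6 inches in modern units, which is close to the approximate figure quoted above and several inches taller than a naive reading of "5 feet 2" suggests.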

Overall, misinformation is a topic that requires careful thought and education because it can alter perceptions and propagate fabrications. With deliberate effort, individuals can elevate their critical thinking skills and in turn challenge their own or others’ faulty or reflexive thinking (7).

Let’s move on to various cognitive tools you can build to counter misinformation.

V. Tools and strategies to counter misinformation

The tools outlined below serve as crucial defenses against misinformation, meaning that people who possess these qualities are better equipped to recognize and reject false or misleading information. People who use these tools are more likely to critically evaluate information, rely on evidence and logic, and be skeptical of claims that don’t stand up to scrutiny (4). In essence, the tools act as protective factors against being misled by misinformation.

Cognitive sophistication and reasoning abilities

Skills that help to reduce susceptibility to fake or pseudo news, such as critical thinking, logical reasoning, and problem solving (4, 5). When people with these skills encounter fake news, they use critical thinking and logic to question the source and the evidence. By noticing a lack of supporting studies and listening to professionals, they can reject false claims.

Cognitive reflection

The ability to stop and reflect on your own thinking processes. Cognitive reflection involves the ability to question your assumptions, consider different perspectives, and avoid jumping to conclusions (4, 6, 7).

Example: Before making a significant purchase, such as a car or a home, you might engage in cognitive reflection by considering your long-term financial goals, budget constraints, and potential consequences of the purchase.

People using cognitive reflection may reflect on whether the purchase aligns with their values and priorities, rather than making an impulsive decision based solely on immediate desires.

Basic science knowledge

Having a good understanding of fundamental scientific concepts and principles. Basic science knowledge helps people evaluate information and claims based on scientific evidence and logic. Those with higher scientific knowledge are better equipped to discern false information, although it does not always lead to the sharing of true information (4, 5).

Numeracy

Being comfortable with numbers and being able to understand and interpret quantitative information helps people assess statistical claims and arguments based on data (4).

Skepticism towards pseudo-science, conspiracy theories, and buzzwords

Having a healthy dose of doubt or skepticism towards ideas or claims that lack scientific evidence or logical support (1). This involves questioning the validity of pseudo-scientific claims, conspiracy theories, and trendy or catchy phrases that may be misleading (1, 2, 4, 5, 7).

Discernment

The extent to which a person can distinguish between true and false information based on their own judgments (5, 6).

Acknowledging bias

Many false headlines are shared reflexively when they support or refute one’s beliefs, and these headlines are often related to political, religious, health, or medical preferences (1, 2, 5, 7). Being aware of this tendency and asking yourself whether a headline is accurate may help reduce the reactive urge to share misleading information (8).

VI. Conclusion

Misinformation is a challenge we all face in the digital age. By being aware, fact-checking, and promoting accurate information, we can navigate through the maze of misinformation and contribute to a more informed and connected world (5, 6, 8).

Many of the tips shared in this guide involve improving your own digital literacy skills. It is not enough to know how to navigate the internet; you must also carefully consider the information that you come across. If there is one takeaway, it is this: consider the accuracy of what you are reading before you share. If you are unsure of its truthfulness, seek out the source of what you are reading and discuss the findings with people you trust.

Finally, here are the answers to our quiz about newspaper headlines. Whether you performed better or worse than your predicted outcome, it’s not always easy to distinguish true from false information. Keep practicing and keep thinking with a critical mind!

True headlines

We would like to acknowledge the guidance received from Dr. Gordon Pennycook and Dr. R. Nicholas Carleton.

CIPHER is currently being supported through the Public Health Agency of Canada’s investment Supporting the Mental Health of Those Most Affected By the COVID-19 Pandemic. The views expressed herein do not necessarily represent the views of the Public Health Agency of Canada.

VII. References

1. Van Bavel, J. J., Baicker, K., Boggio, P. S., Capraro, V., Cichocka, A., Cikara, M., Crockett, M. J., Crum, A. J., Douglas, K. M., Druckman, J. N., Drury, J., Dube, O., Ellemers, N., Finkel, E. J., Fowler, J. H., Gelfand, M., Han, S., Haslam, S. A., Jetten, J., … Willer, R. (2020). Using social and behavioural science to support COVID-19 pandemic response. Nature Human Behaviour, 4(5), 460–471. doi.org/10.1038/s41562-020-0884-z

2. Browne, M., Thomson, P., Rockloff, M. J., & Pennycook, G. (2015). Going against the herd: Psychological and cultural factors underlying the “vaccination confidence gap.” PLoS ONE, 10(9), e0132562. doi.org/10.1371/journal.pone.0132562

3. Brugnoli, E., Cinelli, M., Quattrociocchi, W., & Scala, A. (2019). Recursive patterns in online echo chambers. Scientific Reports, 9(1), 20118. doi.org/10.1038/s41598-019-56191-7

4. Pennycook, G., McPhetres, J., Bago, B., & Rand, D. G. (2022). Beliefs about COVID-19 in Canada, the United Kingdom, and the United States: A novel test of political polarization and motivated reasoning. Personality & Social Psychology Bulletin, 48(5), 750–765. doi.org/10.1177/01461672211023652

5. Pennycook, G., McPhetres, J., Zhang, Y., Lu, J. G., & Rand, D. G. (2020). Fighting COVID-19 misinformation on social media: Experimental evidence for a scalable accuracy-nudge intervention. Psychological Science, 31(7), 770–780. doi.org/10.1177/0956797620939054

6. Pennycook, G., & Rand, D. G. (2019). Fighting misinformation on social media using crowdsourced judgments of news source quality. Proceedings of the National Academy of Sciences, 116(7), 2521–2526. doi.org/10.1073/pnas.1806781116

7. Pennycook, G., & Rand, D. G. (2020). Who falls for fake news? The roles of bullshit receptivity, overclaiming, familiarity, and analytic thinking. Journal of Personality, 88(2), 185–200. doi.org/10.1111/jopy.12476

8. Pennycook, G., & Rand, D. G. (2022). Accuracy prompts are a replicable and generalizable approach for reducing the spread of misinformation. Nature Communications, 13(1), 2333. doi.org/10.1038/s41467-022-30073-5

9. Epstein, Z., Berinsky, A. J., Cole, R., Gully, A., Pennycook, G., & Rand, D. G. (2021). Developing an accuracy-prompt toolkit to reduce COVID-19 misinformation online. Harvard Kennedy School Misinformation Review, 2(3). doi.org/10.37016/mr-2020-71

10. Swami, V., Voracek, M., Stieger, S., Tran, U. S., & Furnham, A. (2014). Analytic thinking reduces belief in conspiracy theories. Cognition, 133(3), 572–585. doi.org/10.1016/j.cognition.2014.08.006

11. Snopes. (n.d.). Stanley Kubrick moon landing conspiracy theories. Retrieved from snopes.com/fact-check/stanley-kubrick-moon-landing

12. Snopes. (n.d.). Stanley Cup lead. Retrieved from snopes.com/fact-check/stanley-cup-lead

13. Snopes. (n.d.). Fruit of the Loom cornucopia. Retrieved from snopes.com/fact-check/fruit-of-the-loom-cornucopia

14. Fast Company. (2019, February 6). Ikea politely asks that you stop playing giant games of hide-and-seek in its stores. Retrieved from fastcompany.com/90399077/ikea-politely-asks-that-you-stop-playing-giant-games-of-hide-and-seek-in-its-stores

15. The Independent. (2016, October 18). Parents are getting tattoos of their children’s drawings. Retrieved from independent.co.uk/life-style/health-and-families/parents-tattoo-children-drawings-a7371826.html

16. Snopes. (n.d.). Man unearths underground city in Turkey. Retrieved March 7, 2024, from snopes.com/fact-check/man-unearths-underground-city-turkey

17. National Gallery of Victoria. (n.d.). Napoleon facts and figures. Retrieved from ngv.vic.gov.au/napoleon/facts-and-figures/did-you-know.html

18. UAB News. (n.d.). A media literacy expert shares three tips to dodge disinformation. Retrieved from uab.edu/news/youcanuse/item/13859-a-media-literacy-expert-shares-three-tips-to-dodge-disinformation